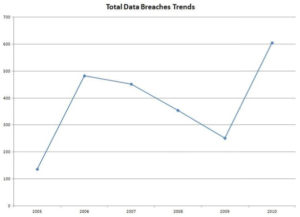

In Aesop's fable "The Frog and the Ox", a frog tries to look bigger than it actually is by inflating itself more and more... till it bursts. Well, that's not the end of the story, but the frog's behaviour somewhat resembles what Facebook has done with one of its metrics: the average time people spend watching a video. This metric is key for prospective buyers of ad space, since it is a proxy for how long Facebook users are exposed to ads: the longer the exposure, the more valuable the advertising space. The metric is particularly important for a company like Facebook, whose product is basically you and your time. The news broke after the advertising company Publicis warned its customers about Facebook's alleged miscalculation, and was then reported by the Wall Street Journal. Facebook itself admitted the "discrepancy" by posting a clarification note on its computation method.
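A toy calculation shows how a change in the computation method alone can inflate an average. The sketch assumes, as was widely reported at the time, that the figure was obtained by dividing total viewing time only by views lasting at least three seconds, instead of by all views. The durations are made-up numbers, and the function names are hypothetical.

```python
# Hypothetical watch times, in seconds, for six individual views of a video.
view_durations = [1.0, 2.0, 3.0, 4.0, 10.0, 40.0]

def average_all(durations):
    """Average viewing time computed over every view."""
    return sum(durations) / len(durations)

def average_over_threshold(durations, threshold=3.0):
    """Average viewing time computed only over views of at least `threshold` seconds.

    Short views are dropped from both the numerator and the denominator,
    which is the (assumed) source of the inflation.
    """
    kept = [d for d in durations if d >= threshold]
    return sum(kept) / len(kept) if kept else 0.0

def inflation_pct(durations, threshold=3.0):
    """Percentage by which the thresholded average exceeds the true average."""
    true_avg = average_all(durations)
    return (average_over_threshold(durations, threshold) - true_avg) / true_avg * 100

print(average_all(view_durations))             # 10.0
print(average_over_threshold(view_durations))  # 14.25
print(round(inflation_pct(view_durations), 1))
```

With these numbers, discarding the two short views raises the reported average from 10 to 14.25 seconds, about 42.5% higher, even though nobody watched the video any longer.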

Welcome

Hi! Welcome to my blog. I'm a Full Professor of Computer Science at LUMSA University in Rome. Here you'll find information on my research and professional activities. My present focus is on network and service economics and, more broadly, whatever lies at the intersection of economics and computer science. I hope you'll share my interests. Do not hesitate to drop me a line.

Maurizio Naldi